Why I Rewrote azd-app's Transport Layer with Connect-RPC

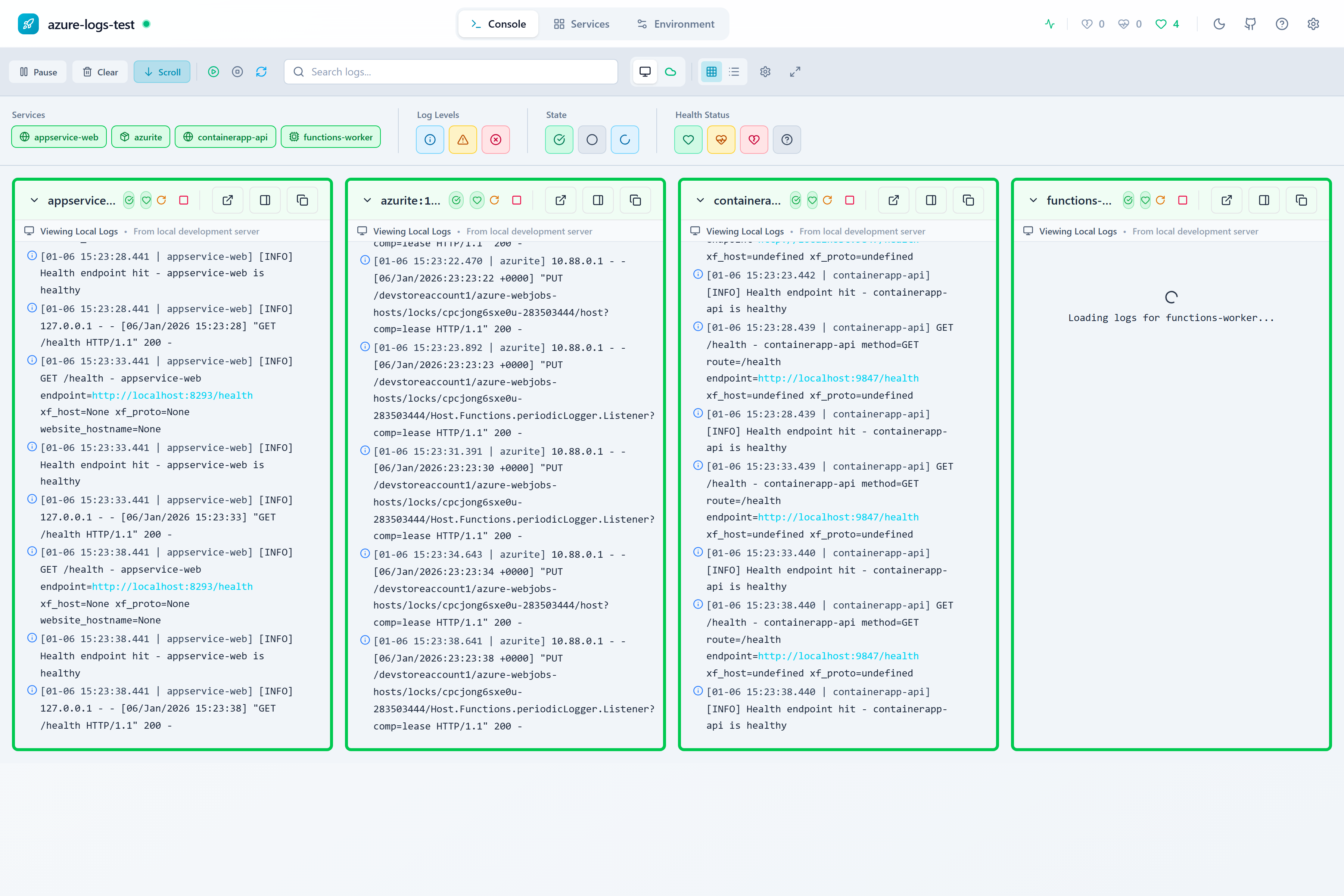

I’ve been building azd-app - a local app runner and observability platform for Azure Developer CLI projects. It starts all your services, streams logs, runs health checks, and gives you a browser dashboard and MCP tools for AI agents. It’s distributed as an azd extension, so you just run azd app run and everything spins up.

I spent the last few months ripping out 28 hand-written REST endpoints and 5 WebSocket streams and replacing them with Connect-RPC. Here’s what happened, what broke along the way, and where this is all heading.

The four-consumer problem

azd-app has four consumers that all need the same data:

- CLI commands - azd app logs, azd app health, azd app info - these are cobra commands that originally called Go functions directly

- Browser dashboard - a React SPA embedded in the Go binary that was hitting REST endpoints and WebSocket streams

- MCP server - 12 tools that AI agents (Copilot, Claude) use to interact with your running services

- Future TUI - a Bubble Tea terminal UI I want to add to azd app run

Each consumer was implementing its own version of the same operations. The REST handlers had hand-written JSON marshalling. The dashboard had TypeScript fetchers with duplicated types. The MCP tools re-derived the contracts independently. And there wasn’t any compile-time guarantee that they agreed on the shape of the data.

Every time I added a field to a response, I had to update it in three places. And I’d inevitably miss one. The MCP tools started drifting from what the dashboard expected. Adding a TUI would’ve meant building a fourth hand-rolled client.

I’d been putting off dealing with this for too long. Something had to change.

Why Connect-RPC and not plain gRPC

I looked at four options:

Raw gRPC was my first instinct, but it’s browser-hostile. You need a gRPC-Web proxy to talk to it from a browser, and server streaming doesn’t work cleanly without trailer support. My dashboard is embedded in the Go binary - I wasn’t about to add a proxy just to serve my own SPA.

tRPC is great for TypeScript projects, but azd-app is Go-rooted. Going TypeScript-only for the schema would’ve been swimming upstream.

OpenAPI/REST with generated clients was an option, but streaming is a bolt-on (SSE at best). I had 5 real-time streams that needed first-class support.

Connect-RPC hit the sweet spot. It speaks gRPC, gRPC-Web, and the Connect protocol on the same handler. The browser dashboard uses @connectrpc/connect-web with native fetch - no proxy needed. The CLI uses the Go client. The MCP tools use the same Go client. And a future TUI will use it too. One proto schema, one codegen step, four typed clients.

The migration: 8 services, 31 RPCs

I defined 9 proto files in proto/azdapp/v1/ that capture the full API surface:

| Service | What it does |
|---|---|
| LifecycleService | Ping, environment info, broadcast stream |
| ProjectService | Project metadata |
| ServicesService | Start, stop, restart, list services |
| LogsService | Log retrieval, real-time streaming, classifications, preferences |
| HealthService | Health checks, streaming health updates, state transitions |
| ModeService | Connection mode (local vs Azure) |
| AzureService | 14 RPCs for Log Analytics, diagnostics, setup state, Azure log streaming |
| BicepService | Bicep template generation |

buf generates the Go and TypeScript stubs. The dashboard imports from src/gen/proto/azdapp/v1/, and the CLI imports from cli/src/gen/proto/azdapp/v1/. Same schema, same types, no drift.

What I learned the hard way

Import extensions matter more than you’d think

Here’s the kind of thing that’ll eat an afternoon. The TypeScript codegen was producing imports like ./lifecycle_pbjs instead of ./lifecycle_pb.js. Turns out protoc-gen-es treats the import_extension parameter as a literal string to append. If you write import_extension=js, you get pbjs. You need import_extension=.js - with the dot. It’s a tiny thing, but it broke the entire dashboard build and I spent way too long staring at it before I figured it out.

HTTP/1 streaming can deadlock in tests

I hit a nasty deadlock when testing Connect server-streaming over HTTP/1. The header flush step would hang because the test was waiting for the stream to start before sending the stimulus that would cause the stream to emit. I fixed it by driving the stimulus from a goroutine so the stream could flush its headers while the test was already listening.
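The shape of that deadlock can be reproduced with nothing but net/http. The sketch below is not azd-app's test code and doesn't use Connect. It assumes a handler that sends no headers until its first event, and the names newStreamServer and firstEvent are made up for illustration. http.Get blocks until response headers arrive, so a test that opens the stream first and sends the stimulus afterwards never gets as far as the stimulus.

```go
package main

import (
	"bufio"
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// newStreamServer (hypothetical helper) returns a test server whose handler
// mimics a lazy server stream: it writes nothing, not even headers, until
// the first event arrives on events.
func newStreamServer(events <-chan string) *httptest.Server {
	return httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		flusher, ok := w.(http.Flusher)
		if !ok {
			http.Error(w, "streaming unsupported", http.StatusInternalServerError)
			return
		}
		for {
			select {
			case <-r.Context().Done(): // client went away
				return
			case ev, ok := <-events:
				if !ok {
					return
				}
				fmt.Fprintln(w, ev)
				flusher.Flush() // the first Flush is what sends the headers
			}
		}
	}))
}

// firstEvent (hypothetical helper) opens the stream and returns the first
// line it receives.
func firstEvent(url string, events chan<- string) (string, error) {
	// Drive the stimulus from a goroutine. Sending it after http.Get
	// returns would deadlock: Get waits for headers, and the handler
	// only sends headers together with its first event.
	go func() { events <- "service started" }()

	resp, err := http.Get(url)
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()

	line, err := bufio.NewReader(resp.Body).ReadString('\n')
	return strings.TrimSpace(line), err
}

func main() {
	events := make(chan string)
	srv := newStreamServer(events)
	defer srv.Close()

	line, err := firstEvent(srv.URL, events)
	if err != nil {
		fmt.Println("stream failed:", err)
		return
	}
	fmt.Println("first event:", line)
}
```

Moving the send below the http.Get call, without the goroutine, is enough to make this hang.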

Back-pressure isn’t optional for streaming

With 5 different server streams, I had to think carefully about what happens when a consumer falls behind. Not all streams are equal:

| Stream | Policy | Why |
|---|---|---|
| StreamLocalLogs | Drop oldest, bounded ring | Logs are high-volume, losing old ones is fine |
| StreamAzureLogs (polling) | Block producer with backoff | Azure Log Analytics queries cost money |
| StreamAzureLogs (realtime) | Drop oldest, emit notice | Same as local but notify the client |
| StreamHealth | Last-value-wins | Only the latest health state matters |
| StreamBroadcast | Drop oldest, disconnect slow consumers | UI hints are best-effort |
| StreamStateTransitions | Block producer, 256 buffer | State changes are critical - can’t drop them |

I documented each policy directly in the proto RPC comments so the next person (or future me) doesn’t have to guess.
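To make two of those policies concrete, here is a minimal sketch of drop-oldest and last-value-wins on plain buffered Go channels. It is an illustration under assumptions, not azd-app's implementation: sendDropOldest is a hypothetical helper, it assumes a single producer per channel, and the channel must be buffered.

```go
package main

import "fmt"

// sendDropOldest (hypothetical helper) never blocks the producer. If the
// buffer is full it evicts the oldest queued item and retries the send.
// It reports whether anything was dropped, which is where a "notice"
// message could be emitted. Assumes one producer and cap(ch) >= 1.
func sendDropOldest[T any](ch chan T, v T) (dropped bool) {
	for {
		select {
		case ch <- v:
			return dropped
		default: // buffer full, fall through to eviction
		}
		select {
		case <-ch: // evict the oldest buffered item
			dropped = true
		default: // the consumer drained it in the meantime, just retry
		}
	}
}

func main() {
	// Drop oldest, bounded buffer: only the newest 3 lines survive.
	logs := make(chan string, 3)
	for i := 1; i <= 5; i++ {
		sendDropOldest(logs, fmt.Sprintf("line %d", i))
	}
	close(logs)
	for l := range logs {
		fmt.Println(l)
	}

	// Last-value-wins is the same policy with a buffer of exactly 1.
	health := make(chan string, 1)
	sendDropOldest(health, "starting")
	sendDropOldest(health, "healthy")
	fmt.Println(<-health)
}
```

The block-producer policy needs no helper at all: it is an ordinary blocking send on a buffered channel, so the producer stalls once the buffer fills.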

Channel close can’t mean two things

I had a race condition in the broadcast manager where I was using channel close as both “we’re done sending” and “shut everything down.” Those are two different things. The fix: channel close means shutdown, ctx.Done() means slow-consumer disconnect. Sounds obvious in hindsight, but it caused intermittent test failures that were maddening to track down.
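A minimal sketch of that separation, assuming a per-subscriber loop that relays messages to a stream. The names forward, errShutdown, and endReason are hypothetical and this is not the actual broadcast manager. The point is that the two exits are distinct select cases with distinct results.

```go
package main

import (
	"context"
	"errors"
	"fmt"
)

// errShutdown (hypothetical) marks the manager-wide shutdown path.
var errShutdown = errors.New("broadcast manager shut down")

// forward relays messages to send until one of two different things happens:
//   - msgs is closed: the whole manager is shutting down (errShutdown)
//   - ctx is done: only this consumer is being disconnected (ctx.Err())
func forward(ctx context.Context, msgs <-chan string, send func(string) error) error {
	for {
		select {
		case <-ctx.Done():
			return ctx.Err()
		case m, ok := <-msgs:
			if !ok {
				return errShutdown
			}
			if err := send(m); err != nil {
				return err
			}
		}
	}
}

// endReason (hypothetical helper) ends a subscriber one of the two ways
// and returns what forward reported.
func endReason(shutdown bool) error {
	ctx, cancel := context.WithCancel(context.Background())
	defer cancel()

	msgs := make(chan string)
	if shutdown {
		close(msgs) // manager-wide shutdown
	} else {
		cancel() // drop just this consumer
	}
	return forward(ctx, msgs, func(string) error { return nil })
}

func main() {
	fmt.Println("shutdown:  ", endReason(true))
	fmt.Println("disconnect:", endReason(false))
}
```

With the two signals split like this, a caller can tell a deliberate per-consumer disconnect from a global shutdown just by inspecting the returned error.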

Resist the urge to refactor everything at once

I was tempted to extract service interfaces while doing the transport swap. Cleaner testability, better separation of concerns - all good things. But here’s the thing: extracting interfaces around concrete types is orthogonal to swapping the transport layer. I explicitly deferred it to keep the migration PRs focused. Scope creep during a migration like this is how you end up with a 3-month PR that nobody can review.

When the proto doesn’t match reality, rewrite the proto

My initial AzureService proto was designed based on what I thought the handlers returned. When I started wiring them up, I found the actual response shapes had drifted from my mental model. I had to stop and do a complete one-shot rewrite of the AzureService proto to match what the Go handlers actually produced. Here’s the lesson: proto-first design is great, but verify against the real code before you commit to it.

The browser dashboard is already on Connect

This wasn’t a separate “expand to browser” step - the Connect migration is the browser migration. All the dashboard hooks now use Connect-ES instead of raw fetch and WebSocket:

- useProject talks to ProjectService.GetProject

- useHealthStream uses HealthService.StreamHealth (server streaming, replacing WebSocket)

- useLogStream uses LogsService.StreamLocalLogs (replacing another WebSocket)

- useAzureConnectionStatus uses ModeService.GetMode/SetMode

One nice side effect: testing got way simpler. The old hooks needed global fetch mocking with carefully ordered response queues. Now they use createRouterTransport - an in-memory Connect transport. One test suite went from 501 lines of fetch mocking down to 15 clean tests.

What’s next: TUI and azd core

Bubble Tea TUI

I want to add a Bubble Tea TUI to azd app run --tui - a multi-panel terminal view of your running services, logs, and health. The whole point of the Connect migration was to make this easy. The TUI will consume the exact same RPC endpoints as the dashboard and CLI. No new data layer work needed. It’s just another presentation surface on top of the same proto schema.

The azd extension framework already added interactive extension support, so the plumbing’s there. I just need to build the panels and keybindings.

Integration into azd core

There’s an active EPIC in the Azure Developer CLI repo (azure-dev#7681) about building a “local view of your app and resources.” The discussion initially characterized azd-app as “just a browser dashboard,” but that’s not what it is. It’s a local observability platform with three first-class surfaces (CLI, browser, MCP) and a fourth on the way (TUI).

I wrote a local-view-architecture spec that makes the case for adopting azd-app as the data layer for this EPIC. The architecture already hits every priority the EPIC laid out - terminal-native snapshots, browser dashboard, agent-consumable MCP tools. The Connect-RPC migration was partly motivated by this - having a clean, schema-driven API surface makes it realistic to propose azd-app as a foundation rather than a standalone extension.

Whether that happens or not, the migration was worth it on its own. I went from maintaining three divergent implementations of the same API to one proto schema that generates everything. Adding a new endpoint now means writing one proto definition, one Go handler, and running buf generate. The TypeScript client, the MCP tool types, and the CLI client all come for free.

I should’ve done this a year ago.